When to use Feast & ClearML in your MLOps lifecycle

TLDR; these tools (feast & clearML) are great additions if you’re company already has ML models running in production and further wants to improve the speed of deploying new ones

Part 1: What is Feast & ClearML and when do I use it in a MLOps lifecycle?

Often new tools pop up that promise to make it easier or better to manage your ML models in production. For good reason because managing ML models in production is hard.

Two upcoming tools are Feast (feature store) & ClearML (experiment tool). Feast promises to be the fastest path to operationalising analytic data for model training. And ClearML promises to easily develop, orchestrate, and automate ML workflows at scale.

Do these tools live up to their promise? And does that mean you should start installing these tools right away? In this 2 part blog post series, we’ll look at what it is whether you need it (part 1) and how to install and use it (part 2). Enjoy!

Background MLOps

The process of deploying and maintaining ML models in production is hard. Multiple stakeholders are involved which leads to complexity in defining responsibilities, managing expectations, define success metrics.

The goal of a good MLOps setup is to design a workflow that allows to experiment, deploy and iterate on solutions in a fast and durable way. Some important design principles are:

- Create autonomy for data scientists: minimise handovers and shared responsibilities between teams.

- Focus on improving the time to production KPI

- No black box machine learning application in production: everyone should be able to understand Machine Learning workloads.

- Use best-of-breed tooling when possible (no one data platform solves all).

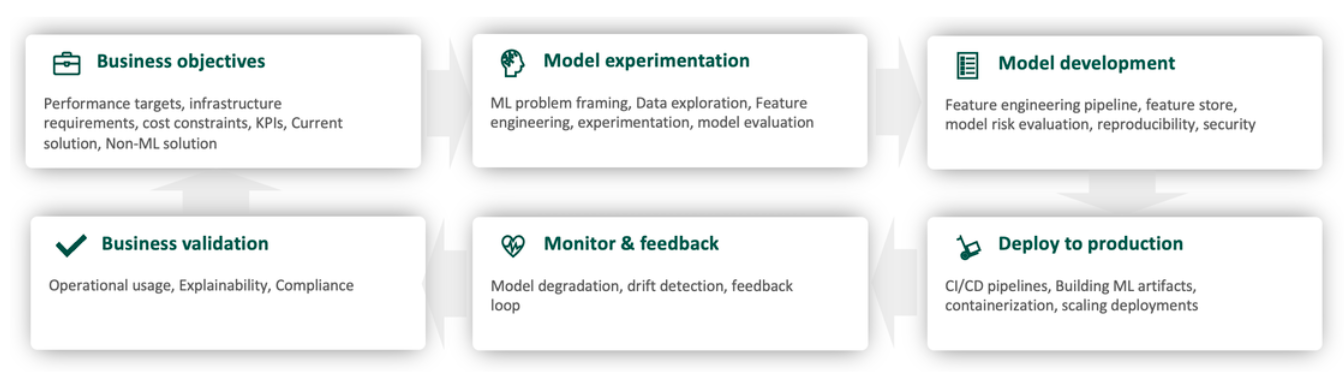

The field of MLOps helps to streamline this process and at Enjins we see 6 important phases for the development and deployment of ML models

Figure 1: 6 phases of the MLOps lifecycle

Each of these phases comes with different tools and practices to help you to deal with these challenges. I’d recommend looking around at mlops.toys to see other great MLOps projects. Right now, we’ll focus on the model experimentation & development part.

Feast & Clear ML

As a part of our training program for all internal employees, we gave hands-on training about working with the tools Feast & ClearML. These tools solve the problems

- Transparency: see historical experiments to compare model results

- Reproducibility: version control all the metadata of ML models to reproduce the predictions

- Efficiency: no duplication of feature calculation across models.

Feast is an operational system for managing and serving machine learning features to models in production. It can serve features from a low-latency online store (for real-time prediction) or an offline store (for batch scoring). See Introduction

ClearML is an open-source tool that automates the preparation, execution, and analysis of machine learning experiments. it provides a UI for tracking experiments that data scientists can use to collectively see their training history and perform hyperparameter optimisation. See What is ClearML? | ClearML

When to use feast & ClearML in MLOps lifecycle?

These tools come with powerful features but also add complexity to your infrastructure and can lead to additional overhead. I prefer the KISS method here (Keep it simple stupid), So here is an overview based on our experience on when to use them and when not to use them

When to use Feast

- When you have a big data team (20+) and are sharing features a lot across ML models

- If you have many duplicate (50+) ETL pipelines which lead to high compute costs

- You need predictions instantly with latencies <200ms

- When you have a lot of computing intense feature engineering pipelines

- Your models mainly use timestamped rows and combining data sources requires a lot of point-in-time joins

When not to use Feast

- When you are just starting to bring ML models into production, feature store will be overkill, start with a good monitoring setup

- Your features are not time-dependent

- Your features are computationally inexpensive

- Having a small data team (<5) so sharing of features is not needed

When to use ClearML

- You have an ML model running in production and want to improve it

- Models need frequent retraining ( daily or weekly, often with time series)

- Multiple data scientists work on the same ML model

- You want to set up a retraining pipeline based on model performance metrics

When not to use ClearML

- When you just started experimenting with ML and do not have ML models running in production

- You haven’t set up a model monitoring feedback loop yet which should be your first priority

- If you want to use the open source version and are not able to manage the underlying Infrastructure (K8s or EC2)

- You are already using alternatives such as MLFlow and are happy with that

- When you are using the R language for your ML models

I hope this gives you a bit of background about the tools! In part 2 I will continue with the installation and configuration setup.

Please fill in your e-mail and we'll update you when we have new content!

Follow us on LinkedIn!

Follow us on LinkedIn! Check out our Meetup group!

Check out our Meetup group!